We’re making positive changes with ethical AI

As artificial intelligence becomes more ubiquitous—in airports to recognize passengers’ faces, in cars to help drive us on crowded streets, and on websites to track what articles and ads we read—more people than ever are worried about AI’s integration into daily life. In 2019, the New York Times summarized these fears with the headline: “Is Ethical A.I. Even Possible?” We say ethical AI is possible—because it’s already happening on the Wikimedia projects.

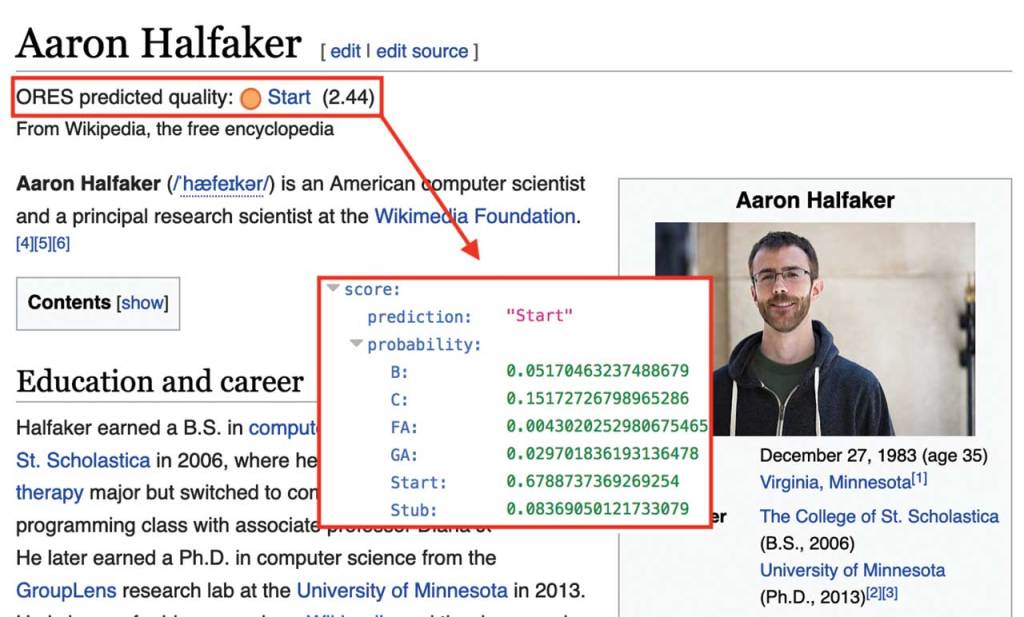

In the past year, volunteers worked closely with our AI service, ORES, to improve how it serves Wikipedias in seven languages: Basque, Czech, Hungarian, Finnish, Serbian, Dutch, and Hebrew.

We’re modeling a different way to use AI. Many corporations will safeguard their algorithms to keep them proprietary and protect intellectual property. Commercial interests also drive their decisions. Wikimedia is creating AI-related software and tools that are open source, transparent in operation, and in dialogue with a community that tells us what works and what doesn’t.

In April 2019, we released a white paper, Ethical & Human-centered AI at Wikimedia, that presents potential risk scenarios and the improvements we can make, both to the process we follow in developing AI-powered products and to the products’ designs.

Employing ethical AI makes an impact both “behind the scenes” and for everyone to see. It helps Wikimedia staff make tools and software that are rooted in our values. It helps volunteers make edits that improve Wikipedia articles and improve our AI’s functionality. And ethical AI helps us stay focused on what matters: Expanding the Wikimedia projects with more knowledge and with firm principles of fairness.

Photo credits

Could algorithmically-generated section recommendations inadvertently increase gender bias in biographies of women? (risk scenario A: Reinforcing existing bias)

Unknown

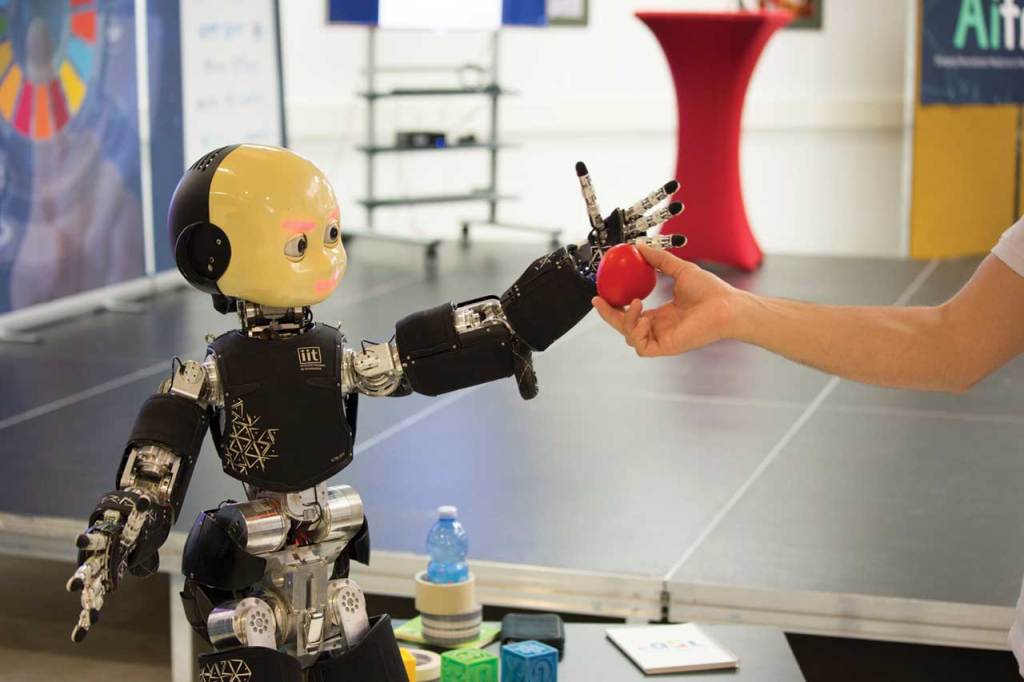

AI for Good Global Summit 2018, 15-17 May 2018

Geneva ©ITU/D.Procofieff ITU (International Telecommunication Union)